In the 1950s, engineers faced a challenge. The parts they were using to wire computers, namely transistors, were too bulky for their plans to build more powerful machines. In response, they did something remarkable: they showed that it was possible to greatly shrink a computer’s main circuitry by etching, or chemically burning, the transistors onto tiny chips of silicon. Since then, manufacturers have used the same basic process to cram many more circuits onto ever tinier chips that, ultimately, have powered today’s smartphones, PCs, and the internet.

In a recent article published in Science Translational Medicine, a team of NIH BRAIN Initiative®-funded researchers showed how this chip manufacturing process may also help neuroscientists overcome similar challenges they face today in recording brain wave activity.

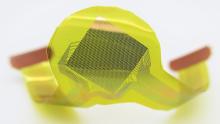

Led by Jonathan Viventi, Ph.D., an assistant professor at Duke University, John A. Rogers, S.M., Ph.D., director of the Center on Bio-Integrated Electronics at Northwestern University, and Bijan Pesaran, Ph.D., professor of Neural Science at New York University, the team described how they made the Neural Matrix, a thinner-than-hair, flexible electrocorticography device that has the potential to record brain activity with higher fidelity and for longer periods than existing devices.

Originally described in the 1950s, electrocorticography is a method for recording electrical activity that involves the surgical implantation of a grid of metal electrodes on the surface of the brain. The electrodes rely on faradaic sensing, which detects changes in the brain’s ion, or salt, levels that result from neural activity. Over the years, the technique has been used to map out seizures in patients who have drug-resistant epilepsy and to help scientists investigate the inner workings of the brain, such as how it controls speech or learning and memory. But researchers would like to have a system that detects finer changes in activity, over greater areas and for longer times. In the new study, the researchers showed how this may be possible.

To do this, they redesigned the circuitry of an electrocorticography device in such a way that microchip manufacturing techniques could be used to etch and encase many more electrodes than are currently used into soft, waterproof strips of silicon. The increase in electrode density boosts the chances of detecting smaller signals, and the encasement lengthens the life of a device by, in part, reducing the salt corrosion seen with today’s devices.

The key to the new device, they argued, was redesigning circuits to record changes in neural signals through something called a capacitive interface, or the charge that builds up between the surface of the device and the brain. As with computer chips, these circuits can be largely etched into silicon and do not need the bulky parts used to run the traditional faradaic sensing electrodes.

In preliminary experiments, the authors provide support for this design by showing that small versions of the new capacitive devices can detect a rat brain’s response to a sound just as well as traditional faradaic devices can, and for longer periods of time. Four out of five devices worked as long as 200 to 400 days.

Further results showed that larger versions of the Neural Matrix can be made for bigger brains, including our own. These devices contained just over 1000 electrodes, about three to four times more than existing systems, packed at a density anywhere from 10 to 100 times greater than that of other devices. Experiments with non-human primates suggested that these versions were highly sensitive and could pick up subtle changes in brain activity associated with different aspects of reaching for an item.

Will the Neural Matrix be as revolutionary as the computer chip?

As the authors state, it still needs to be tested in humans. Nevertheless, they conclude that it provides a blueprint for the next generation of devices, which could improve the way doctors diagnose epilepsy, lead to better brain-computer interfaces for people living with paralysis, or have unexpected applications for treating a variety of brain disorders.

Article:

Chiang, C.H., Won, S.M., Orsborn, A.L., Yu, K.J., et al., Development of a neural interface for high-definition, long-term recording in rodents and nonhuman primates. Science Translational Medicine, April 8, 2020. DOI: 10.1126/scitranslmed.aay4682

These studies were supported by the National Institutes of Health (NS099697); the National Science Foundation (CCF-1422914, CCF-1564051); the Defense Advanced Research Projects Agency (DARPA-BAA-16-09, DARPA-BAA-13-20); a Steven W. Smith Fellowship; and a L’Oreal USA for Women in Science Fellowship.

For more information:

The National Institute of Neurological Disorders and Stroke

###

The NIH BRAIN Initiative® is managed by 10 institutes whose missions and current research portfolios complement the goals of the BRAIN Initiative: National Center for Complementary and Integrative Health, National Eye Institute, National Institute on Aging, National Institute on Alcohol Abuse and Alcoholism, National Institute of Biomedical Imaging and Bioengineering, Eunice Kennedy Shriver National Institute of Child Health and Human Development, National Institute on Drug Abuse, National Institute on Deafness and Other Communication Disorders, National Institute of Mental Health, and National Institute of Neurological Disorders and Stroke.

NINDS is the nation’s leading funder of research on the brain and nervous system. The mission of NINDS is to seek fundamental knowledge about the brain and nervous system and to use that knowledge to reduce the burden of neurological disease.

About the National Institutes of Health (NIH): NIH, the nation's medical research agency, includes 27 Institutes and Centers and is a component of the U.S. Department of Health and Human Services. NIH is the primary federal agency conducting and supporting basic, clinical, and translational medical research, and is investigating the causes, treatments, and cures for both common and rare diseases. For more information about NIH and its programs, visit http://www.nih.gov.